By the time I saw the GPT Image 2 Model announcement, my prompt folder was already open in the other window. I'd been living in it for two posts. That Tuesday I was about to run Imagen 4 Fast against the brand kit studies from Part 2. I'd even built the exhibit for it while learning headless CMS on the side. Then OpenAI shipped gpt-image-2 earlier that afternoon. I couldn't resist. I tacked the gpt-image-2 generations onto the Imagen run and ran the two side by side.

I've come to like Imagen 4 Fast over the series. Quality per dollar, hard to beat. What surprised me was gpt-image-2 at low. A full brand kit at $0.72 versus Imagen's $2.24, flexing hard on composition quality at 60% of Imagen's per-image cost.

The press cycle went straight to the top tier, which is fair. That's where the cinematic demos live. I went the other direction. I wanted to know if the cheapest tier, low, would run the prompts I'd spent Part 1 and Part 2 tuning.

They did. All 88. Zero rewrites. No regens. The same canonical prompts that carried gpt-image-1-mini and fought Imagen ported to gpt-image-2 first try.

The result was cleaner than I expected, and it changed how I think about prompt aging.

why the first question on a new model isn't "is it better"

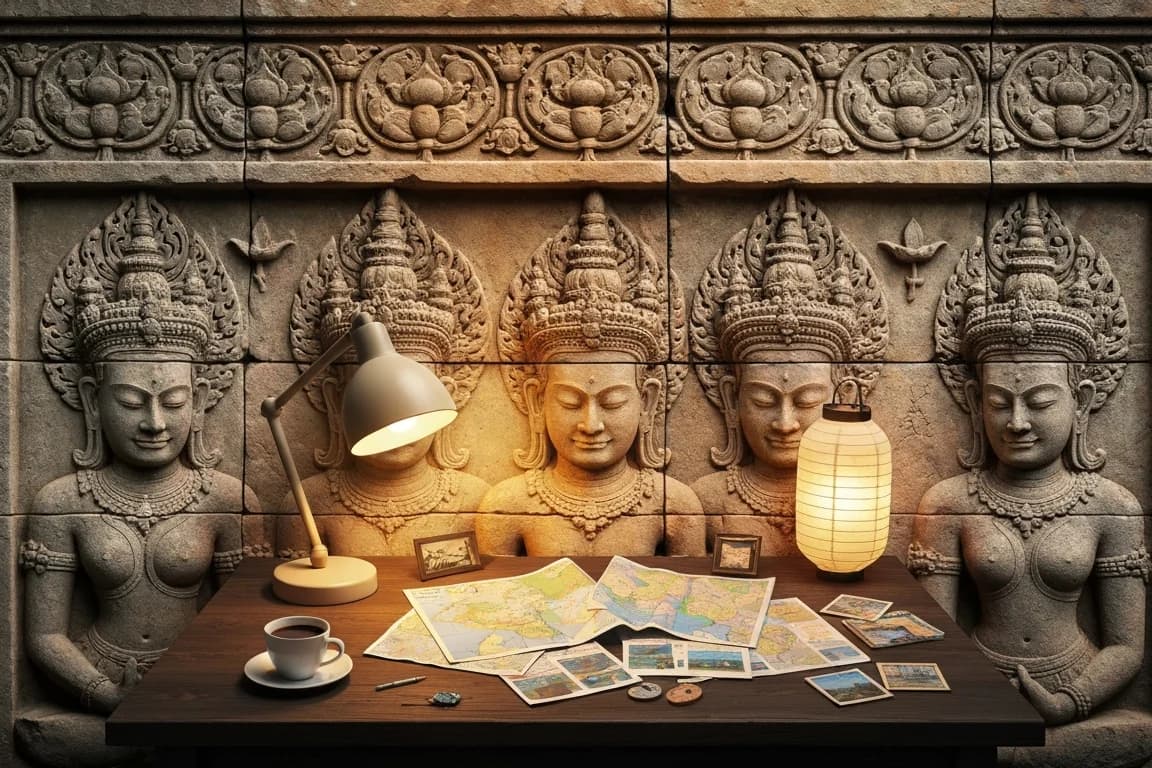

In Part 2 I got halfway through the Khmer run before I thought to try the same prompts on Imagen. That detour taught me more than all of Part 1 combined. Same prompt. gpt-image-1-mini handed back a fully carved sandstone panel, desk and all. Imagen handed back a stone-carved frame wrapped around a photograph of a real desk. Same quality tier. Different grammar entirely. Rewriting the prompt with noun pre-qualification on every Scene token (carved stone lamp, sandstone map scrolls) fixed it. The same model, with the right grammar, rendered the full panel too.

That detour gave me the useful classification: models sort into grammar buckets. The mini bucket reads "the entire scene is carved" holistically and renders every noun in the target medium. The Imagen bucket reads Scene nouns as literal render targets and treats Style as a filter applied on top. Two rendering modes, direct synthesis versus photographic fallback, and a prompt written for one mode slips on the other.

So when a new model ships, the first thing I check isn't is it better. It's which bucket does it land in. Answer one way and my two posts' worth of methodology compounds. Answer the other way and I'm rewriting prompts again.

At these prices, I could answer it before the curiosity cooled.

the $0.07 bucket test

The Khmer heroes were the fastest way to answer the bucket question. Four prompts, four mediums I'd already catalogued as hard cases. Mini bucket would hold all four. Imagen bucket would lose at least one. I queued up the canonical v1 SALT prompts, the same ones Imagen had failed on. No rewrites.

angkor-relief/hero: the desk rendered as a single weathered sandstone bas-relief panel, figures carved into the stone around the lamp.khmer-lacquer/hero: the entire scene on deep black lacquer with gold leaf linework.sbek-thom/hero: every object as perforated leather silhouette, backlit.khmer-ikat/hero: the whole image as woven thread, including the laptop screen.

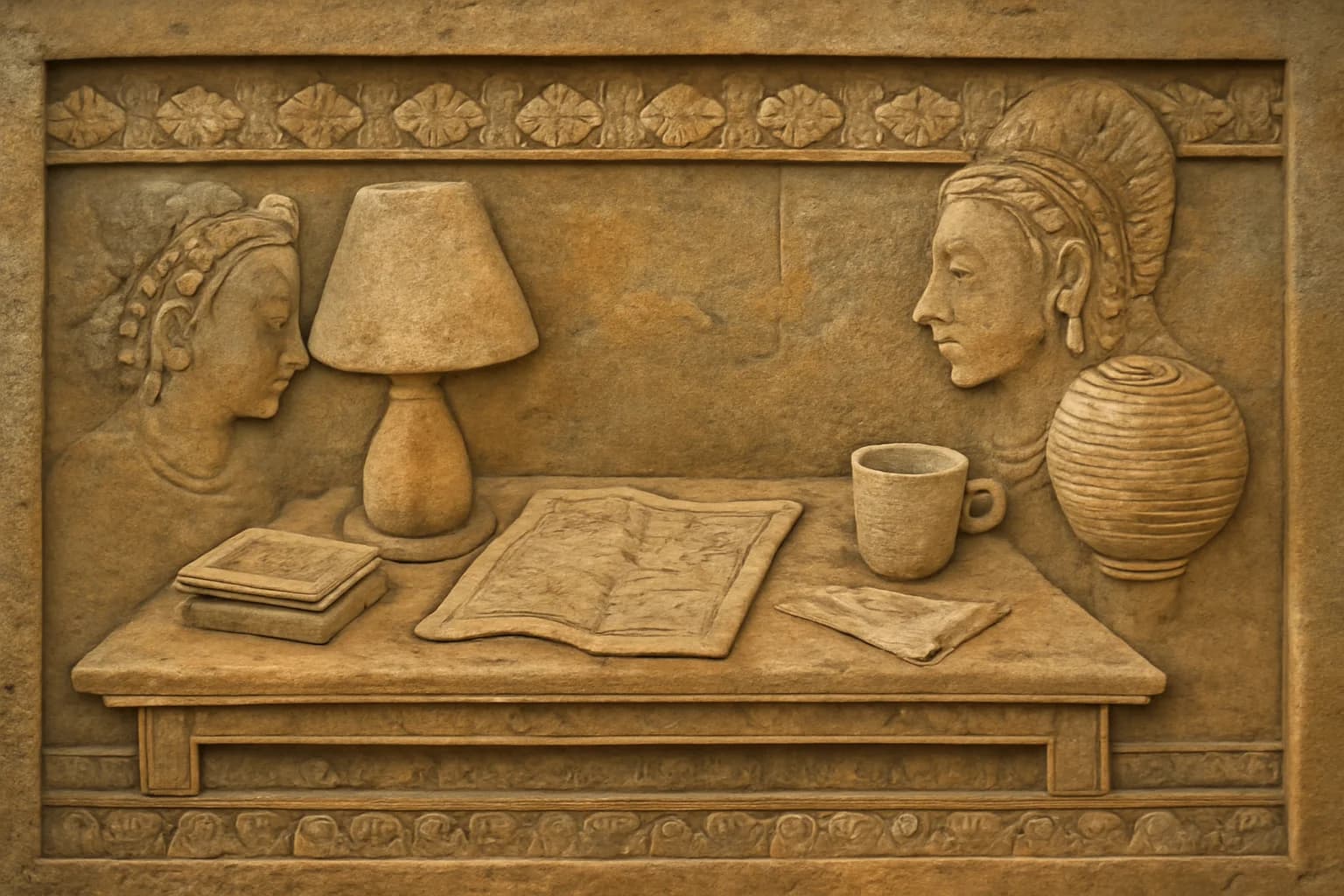

angkor-relief · every object carved as sandstone bas-relief, figures around the lamp.

khmer-lacquer · deep black lacquer, gold leaf linework across the whole scene.

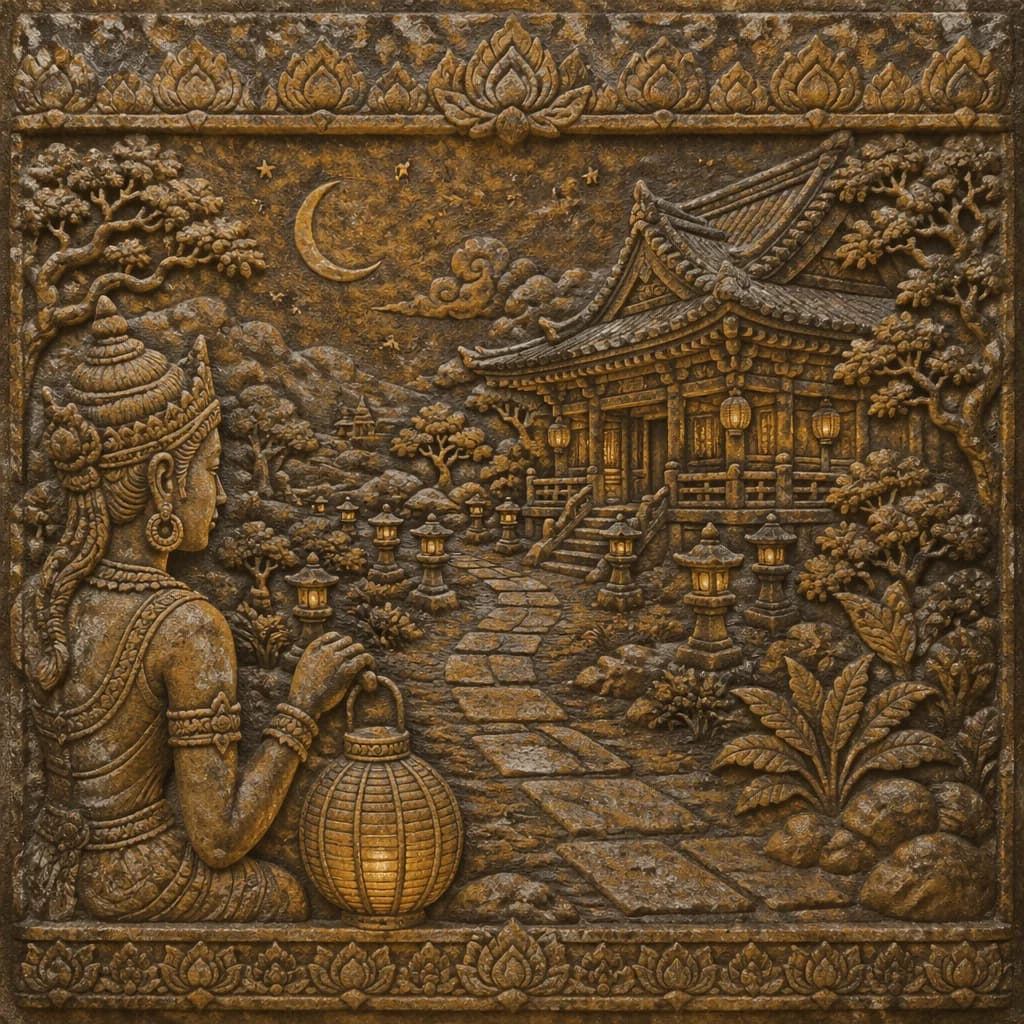

sbek-thom · perforated leather silhouette, firelight behind.

khmer-ikat · the whole image as woven thread, laptop screen included.

Four for four. Direct synthesis, zero fallback. Receipt: $0.072, 21.7 seconds. One sip of coffee.

That told me what I needed. gpt-image-2 low is in the mini bucket on the medium axis. Which makes sense. They're both trained on OpenAI's family of data, after all. The noun pre-qualification rewrites I'd built for Imagen are still Imagen-specific. I can scale up.

scaling the probe to 88 images for $1.15

I ran the full Khmer corpus, then the brand-kit heroes, then the rest of the brand kit. Here's the tally:

| Run | Images | Hold rate | Cost |

|---|---|---|---|

| Khmer diagnostic (4 heroes) | 4 | 4/4 | $0.072 |

| Khmer full set | 28 | 28/28 | $0.360 |

| Brand-kit heroes scout | 8 | 8/8 | $0.144 |

| Brand-kit non-heroes | 48 | 48/48 | $0.576 |

| Total | 88 | 88/88 | $1.152 |

No regens. No rewrites. No role negation for logo-marks, no noun pre-qualification for exotic mediums, no "not a mockup" negatives. The canonical SALT prompts from Parts 1 and 2, unmodified, rendered cleanly across every cell.

For cross-provider context: gpt-image-1-mini low did the same brand kit at $0.28. Imagen 4 Fast did it at $2.24, and only after four-cell regens with role negation added. gpt-image-2 low came in at $0.72 for the same 56-image self-portrait: 2.5× mini, 0.3× Imagen, and first-try clean.

If you only cared about the bucket test, you could stop here. The rule held. The methodology compounds.

composition-forward interpretation: the bonus finding

Somewhere in the brand-kit run I opened khmer-ikat/social-card expecting the usual ikat failure mode: decline-to-render the humans in woven thread, or a photograph of fabric with small figures sewn on it. What came back was two thread-rendered figures leaning over maps under a thread-rendered pendant lamp, their faces woven, their clothing folds woven, the map on the desk woven. The whole tableau committed.

I nodded in satisfaction. It didn't feel like something a low-tier model should have made.

Mini usually doesn't commit to human figures in the ikat medium. Imagen needs the weave-aware rewrites and then still hedges. gpt-image-2 low just made the picture.

Then I started noticing it in other cells. angkor-relief/destination-card came back with a carved devata figure gazing across the panel at the temple, a figure not in the prompt but consistent with how Angkor bas-reliefs actually compose. cinematic-wide/hero staged three layers unprompted: a foreground desk, a midground plant, a background window framing a city skyline. The Style clause had asked for "deep staging" as a descriptor; the model read it as a directive and built the layers itself.

The model was composing forward from the medium instead of only obeying the scene. It treats the medium prompt as license to author. It reaches for the medium's native subject vocabulary (devatas in bas-relief, paired figures under a lamp in woven textile, deep staging in cinematic-wide) and commits to it even when the Scene clause didn't request it.

That's distinct from the cultural-memory finding in Part 2, where the Khmer training distribution pulled figures into stone reliefs unbidden. That was about the medium carrying inheritance. This is about the model having a stronger compositional hand across all mediums, not only on culturally dense ones. The cinematic-wide example is the tell. No cultural substrate, just a style descriptor, and the model still authored a three-layer frame.

pricing honestly

gpt-image-2 low runs $0.012 at 1024×1024 and $0.018 at 1536×1024. For the math on a full self-portrait:

gpt-image-1-mini low: $0.28 for 56 images.gpt-image-2 low: $0.72 for 56 images.Imagen 4 Fast(v1 prompts + regens): $2.24 for 56 images.

If you care about the absolute floor on cost and your prompts tolerate the slightly weaker composition, mini still wins on price. If you care about zero-regen, first-try, commercial-ready output, gpt-image-2 low is the cheapest way there. It's 2.5× the mini price, and it delivers 56/56 on prompts that needed four regens on Imagen at 3× the cost.

Here's how I think about the 2.5× premium: it buys fewer rewrites, fewer regens, and a model that commits to compositions mini politely declines. The extra cost is paying for first-try reliability. If you want sharper faces or better fabric detail, you're going up the tier ladder anyway. That's a different conversation.

caveats I'm not hiding

Three things the run didn't paper over.

Japanese-motif drift on the heroes. The Scene clause in my base matrix says "a Japanese paper lantern." On mini and Imagen that wording is aesthetic flavor. On gpt-image-2, "Japanese" reads as a strong cultural anchor and imports adjacent motifs: maneki-neko, daruma figures, Mt. Fuji silhouettes on lanterns, Japanese pagodas. The drift concentrates on the 1536×1024 heroes and subsides at 1024×1024 non-heroes. Workaround: rename "Japanese paper lantern" to "round paper lantern" and keep the visual signature. I haven't run that workaround at full scale yet.

Ikat renders as cross-stitch, not resist-dye. Same medium-identification precision issue mini showed. The texture is convincingly woven fabric; it's just the wrong woven fabric. True ikat has a characteristic feathered edge where resist-dyed thread meets undyed thread, and neither mini nor gpt-image-2 produces that without explicit weave-technique pre-qualification. Cheap to test at $0.012, haven't done it yet.

cell-shaded/hero refused to go full flat-poster. The Style clause asked for bold linework and flat cel shading, and the model handed back something closer to editorial illustration. Still medium-committed, just not as flat as the directive requested. My read: composition-forward interpretation can fight literal style compliance. When the model wants to make a scene, it won't flatten the scene into a poster just because the prompt said "flat."

are we sleeping on gpt-image-2?

low tiers get dismissed on reflex. When a new model ships, the press cycle goes to the top model. That's where the benchmark-clearing demos live, and that's what trends. The cheap tier is the afterthought in the announcement and usually the last thing anyone tests.

Prompt portability is a capability. Maybe the capability, once you're doing cross-provider work at any scale. You learn how much it matters the hard way, a batch at a time, when your prompts don't transfer to the new model.

For the kind of work I've been doing here (editorial image generation across providers on a fixed prompt corpus), gpt-image-2 low is the strongest result of this series so far. 88 out of 88 on prompts written for two different models, no rewrites, $1.15 total. The top tier might clear higher benchmarks. low is what lets the corpus survive.

what the cheap tier teaches me

Cost reduction was supposed to be about shipping more. In Part 1 it turned out to be about matching model tiers to surfaces. In Part 2 it was about removing the friction that used to schedule the should I question.

This run gave me a third answer. Cost reduction lets you probe faster. The bucket test on gpt-image-2 was $0.072 and 22 seconds: a single hypothesis check cheap enough to run in the time it takes to decide whether to run it.

The prompt corpus is the thing that keeps paying off. Every new model slots in cheaper than the last one because the prompts are already written, graded, and failure-mode-catalogued. The corpus took real time to build. Each new model it validates against is nearly free.

close

The scene-as-medium grammar I worked out in Part 2 ported cleanly to a model I hadn't seen when I wrote that post. That is when I start trusting the rule. If a framework only holds on the model it was derived from, it's a tuning pass. If it holds on a model you hadn't tested yet, it's a description of something structural.

The next post in this series is Cappie, the judge. Once you have three full self-portraits across three providers on identical prompts, the question stops being which model. It starts being how do you evaluate something when the machines disagree and the disagreement is the useful signal. Scoring isn't enough anymore. You need evaluation that explains.

The cross-provider exhibit is up. Same prompt, three providers, three distinct renders. If you want to see composition-forward interpretation at full size, the khmer-ikat/social-card panel is where the bucket differences get loudest.